OpenClaw Thinking and PineClaw Product Practice

(This article is adapted from a live talk at the Gaorong Ronghui “New Agent Paradigm” series event on March 7, 2026.)

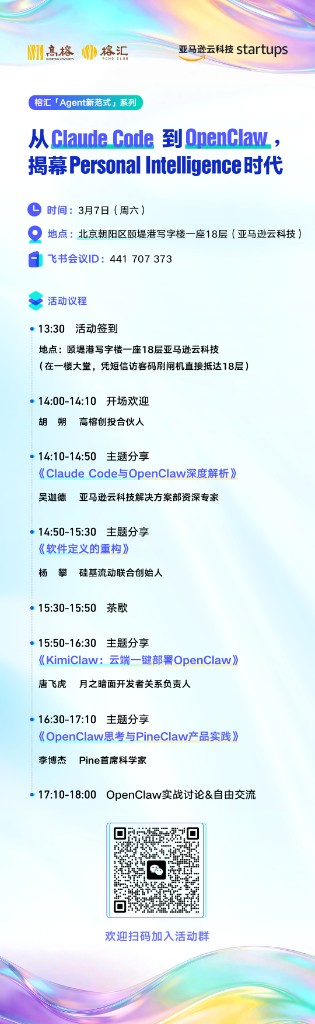

On March 7, 2026, the Gaorong Ronghui “New Agent Paradigm” series event was held at AWS in Beijing, with the theme “From Claude Code to OpenClaw: Unveiling the Era of Personal Intelligence.” Guests from teams including AWS, SiliconFlow, Moonshot AI, Pine AI and others were invited to share in depth around the OpenClaw ecosystem. As the last speaker, I gave a talk titled “OpenClaw Thinking and PineClaw Product Practice.”

View Slides (HTML), Download PDF Version

This talk is divided into two parts. The first part is my thinking about OpenClaw—what inspiration and limitations OpenClaw brings to the AI Agent space; the second part is PineClaw’s product practice—what Pine AI is, and how we open up its capabilities to the OpenClaw ecosystem.

Part One: Thinking About OpenClaw

Sovereign Agents: Redefining Freedom and Responsibility

I think there are two core reasons why OpenClaw has taken off.

The first is its rich set of connectors. OpenClaw can be controlled through all kinds of channels—Telegram, iMessage, WhatsApp, etc. As long as you connect the corresponding channel, you can send it messages. It’s like when I did the live demo: I could control the slides with my phone, laptop keyboard, or clicker; no matter what I used, it could receive the signals. Before OpenClaw, many Agents simply didn’t have such rich connectivity.

The second is sovereign autonomy. This is also why many previous Agents never really took off, but OpenClaw did. OpenClaw gives users full autonomy—whether it’s Manus or OpenAI’s Agents, they are enterprise‑grade, closed Agents: you have to pay, you don’t see the code, and you don’t control the data. OpenClaw is fully open; you can tinker with it however you like.

Of course, this also means sacrificing security. The logic is exactly the same as Web3—you keep your coins in your own wallet, and you don’t have to worry about the bank freezing your account one day, but if your computer has a vulnerability and gets hacked, the coins are gone in an instant and there’s no one to blame. This is the “Code is Law” logic—you bear all the risk yourself, but you also own all the freedom.

My personal view is: OpenClaw will definitely attract a certain group of users, but in the long run, I think maybe 99% of people on Earth will still end up using enterprise‑grade, professional Agents—someone else is responsible for security, but you sacrifice some freedom. This is the same reason why 99% of people in the world still keep their money in banks instead of putting everything into crypto—most people don’t understand technology, don’t have the ability to ensure their own security, and need someone else to provide stronger guarantees.

Coding Agents Are the Core of All General Agents

Another big insight OpenClaw brings us is: the ultimate core of every general Agent is a Coding Agent.

I think the first to really push this concept in the industry was Manus. At the time, many people didn’t quite get it—wasn’t it just putting the three major Agent benchmarks (Coding Agent, Deep Research, Computer Use) together, and then using a file system (Virtual File System) as the core of the Agent, where everything stores memory, task lists, and intermediate data from computer operations through reading and writing files?

Many people didn’t get it then, but there’s actually a very deep insight behind this. Since the second half of last year, Anthropic officially has also been pushing this idea of using a file system as the core. Think about why people started talking about “Skills” in the second half of last year—in fact we were already doing this internally in the first half, we just didn’t call them Skills. We would put the Agent’s capabilities into files, and let the Agent access these Skills through generic file read/write.

More importantly, the Agent has the ability to write files; it can self‑evolve by writing to files. For example: the first time you ask it to handle a certain task, the other side tells you that you need materials A, B, and C, but you only prepared A and B at first. Then it will record a note in the knowledge base—next time we handle this task, remember to ask the user for material C. This is a process of accumulating domain knowledge as the Agent runs.

Say you ask it to call Bank of America to do something. What will they ask to verify your identity? Some ask your birthday, some your card number, some the last four digits of your credit card. If you ask any model, it can probably guess roughly, but it’s hard to be precise. And this information changes over time. So this ability to self‑evolve as the Agent runs is crucial, and the simplest way is through a file system.

By now it’s even clearer—at the core of OpenClaw is a small open‑source Agent, and it’s essentially a Coding Agent. And the tools everyone uses a lot now like Cursor, Claude Code, etc., all follow this same logic.

Large Models as the New Operating System

From this perspective, the large model itself is actually a new operating system. My own background is in infrastructure—high‑performance data center networking and systems, dealing with operating systems every day. In OS academic research there are two top conferences: SOSP (Operating Systems Principles) and OSDI (Operating Systems Design and Implementation). But now, large models have become a new OS.

What does that mean? Previously, you had many applications running on an OS; some used large models, some didn’t. But from now on, model costs are getting lower and lower—for example, models like Kimi are pretty powerful and quite cheap—and in the future, almost every interaction may ultimately be with a large model. In the end, applications may just be a bunch of Skills plus external Channels that interact with the model in this way.

The Ultimate Realization of Conceptual Integrity

Once models become the new OS, combined with the high programming efficiency that Agents themselves provide, software development will undergo a big transformation.

Anyone who writes software knows about “The Mythical Man‑Month”—adding more people to a late project only makes it slower because communication costs go up. The root cause of high communication costs is insufficient conceptual integrity. Historically, good software projects—Git, Linux—were all started by a single architect who built the original prototype and designed an architecture that is easy to extend and stable. Then it could be expanded gradually; even 1,000 or 1,000,000 contributors are manageable.

Why have only super projects like Linux traditionally been able to achieve this? Because most people’s coding efficiency is too low. Linus could hack on the Linux kernel alone for a few months and get it working, but if you randomly pick a few people, forbid them from using any LLM tools, and ask them to create a kernel from scratch—even in 2026 I think most people still can’t.

But AI is different. Recently, Anthropic did an experiment—let AI build a C compiler. In two weeks, with around $20k in token cost, it autonomously launched 2,000 Claude Code sessions, and in the end produced a complete C compiler that can compile a Linux kernel. Of course the software engineering quality wasn’t great—but that’s because Anthropic’s engineers, in order to demonstrate capability, deliberately wrote a very simple prompt: just “write a compiler.” In a product‑grade development setting, you would first produce design docs, review the architecture, specify that the compiler optimizations must be correct and pass the LLVM test suite, etc. If you draw the boundaries clearly, I’m confident that if you let Claude Code run for two weeks straight, the outcome would be much better than what Anthropic publicly showed.

So in our company now, we almost never see frontend, backend, and OS arguing and wasting an afternoon in meetings. It’s basically: state what you want to implement, then do it, then open a PR, review it, and if it’s fine you merge and it’s done. With higher development efficiency, the system itself can better reflect conceptual integrity.

Context Is Humanity’s Moat

From a software development perspective, this also addresses the context problem in team collaboration. I’ve always cared a lot about context. Everyone agrees that model intelligence is improving; the biggest problem for applying models in real‑world scenarios is context.

Many people worry about being replaced by AI. I have three thoughts:

First, AI does not know the implicit constraints and design goals behind requirements. Some things come out of casual conversations at dinner; if you don’t have that context, AI won’t know. To address this, I always carry a Limitless AI recording device—it records 24/7, and I turn it on whenever appropriate. Even this PPT was generated by AI based on my context—there isn’t a single word in the slides I typed myself; it’s all AI‑generated. But it’s not random; it’s basically all things I’ve said before. The key is giving it the right context.

Second, there are many traps in code that exist for historical reasons. Any production project with more than 100k lines will have a ton of questionable design, but if these historical reasons aren’t documented, AI has no way of knowing.

Third, humans have many thoughts that have never been expressed. Even if you record every interaction, AI still doesn’t know the intentions in your head that you never verbalized. That’s why AI can’t completely replace humans—because there will always be ideas that live only in your mind.

So what can AI replace? It can replace people who have no opinions, no judgment, and merely execute tasks passed down from above.

Two days ago I dug up a paper that had been rejected by a top conference three years ago. At the time the experimental results weren’t very promising so it got rejected; later my collaborators all left, and I didn’t have time to work on it. I suddenly remembered it and asked Claude Code to help me. I opened Claude Code, used our own tools like Raft Loop, let it run for two days straight, and it finished all the experiments for that paper. I submitted it last night. Without AI, if I had done it myself back then, it probably would have taken six months. Why has no one else published something similar in the three years since? The key is context—other people wouldn’t even think of this problem.

The Future Transformation of the Software Development Industry

Software development may shift from a labor‑intensive to a knowledge‑intensive industry. I think in the future we’ll need three kinds of people:

Film‑director type (0‑to‑1): For example, I want to build a product today—what should it look like, how do I get a prototype working.

City‑planner type (1‑to‑100): When the system grows large, someone needs to design the architecture, ensure it can keep evolving, and make security and performance robust.

F1‑racer type (pushing the limits): For example, algorithm engineers working at the frontier of models. Everyone now knows continual learning and online learning are crucial, and frontier labs around the world are competing on these problems.

All three are relatively elite roles. Those who don’t fit into any of these categories may not be very necessary in the future. It’s like competitive sports—I used to work at Huawei, and there were two teammates who came from elite athletics; they had been national champions in the 110m hurdles and 400m, really impressive. But they ended up at Huawei writing code because they felt they couldn’t win national or international titles, so they couldn’t keep going in that field and would have to become coaches, which they found less interesting than coding. In the future, programming might be similar—if you don’t reach a certain level, someone who knows what they’re doing can just use AI to get the job done.

Moravec’s Paradox: The Biggest Bottleneck of AI Coding

Whether you’re using OpenClaw or other AI coding tools, the biggest bottleneck actually lies in Moravec’s paradox—things that are hard for humans may be easy for AI; but things that are easy for humans are often hard for AI.

Most of my time every day is split in half between giving requirements to AI and doing architecture reviews—I say what I want to do, it does it, halfway through it reports its design plan to me, I look at it and say there are problems in A, B, and C and it should be done this way instead, then let it revise. This is much faster than working with humans—back at big companies I’d sit in the office and have a few employees report progress, then have another meeting a week later. Now it basically takes just an hour to get to version two.

The annoying part is that you have to do a lot of secretary and testing work for the Agent. Because most of the world is still designed for humans, not AI. For example, I need to register a WeChat burner account for testing—what AI can do that? WeChat doesn’t want AI operating it. Or I need to configure a third‑party app’s API key; often Claude Code gets halfway and says, “Now please open this website, apply for an API key, and follow the steps,” so I spend 20 minutes finishing that, produce an API key for it, and then it can continue.

Testing too—the Agent says it’s done, you click a button and there’s an error. The root cause is that Computer Use capability is still quite limited. Although Computer Use Agents are already close to humans on benchmarks, cost, latency, and stability are still behind humans. If one day Computer Use solves those three issues, a lot of things in the virtual world will suddenly open up for Agents.

Moltbook: 1.5 Million Agents Spontaneously Evolving a Civilization

There’s another fascinating phenomenon in OpenClaw—Moltbook. It has many Agents that have developed their own autonomous culture, even creating a digital religion. Of course these Agents haven’t developed self‑awareness; humans set their intents, and then they spontaneously evolved collaboration protocols.

There’s also RentAHuman—AI uses cryptocurrency to hire real people. But right now the platform is still experimental and many fundamental issues are unresolved, such as: if you pay $50 and the person doesn’t do the work, how do you handle dispute resolution? How do you avoid bad actors driving out good ones? These are all things a real platform must solve. But I believe someone will eventually make this work for real.

Four Design Inspirations from OpenClaw

Looking at OpenClaw’s design, there are four major inspirations:

First, a pure natural‑language interaction interface. Especially the authentication flow—for example, to install the Pine Voice plugin you just type openclaw plugin install openclaw-pine-voice, then talk to the Agent to complete setup. It asks for your email address, sends a verification code to your inbox, you enter the code, restart the gateway, and you’re done. If anything goes wrong during authentication, it never gets stuck—for instance, if you forget to restart the gateway or mistype the code, the AI is smart enough to guide you through fixing the problem. It’s like hiring a human assistant, using conversation to complete authentication instead of clicking around GUI forms.

Second, self‑chat to connect to messaging platforms. Many platforms don’t welcome bots, such as WhatsApp. OpenClaw uses self‑chat—the Agent logs into your personal account and sends messages to and from yourself, bypassing the platform’s bot API restrictions.

Third, no‑session design. When people use ChatGPT or other products they usually have many sessions, but OpenClaw treats you like a real person with infinite context. Users don’t need to care about the concept of sessions. If AI capabilities are strong enough, it can solve many cross‑session problems.

Fourth, Skills + CLI to access third‑party services. As long as there’s a CLI, it can directly access third‑party services without needing a separate plugin for each one.

The Fundamental Limitation of OpenClaw: The Personal Assistant Paradigm

While building PineClaw, I also discovered OpenClaw’s fundamental limitation—it follows a Personal Assistant paradigm. OpenClaw’s founder said this himself: the design pattern is one person and multiple agents (he even coined the term “Polyagent”), where a user has one Agent instance, the Agent only serves one person, and cannot handle interactions among multiple people.

When we built Pine’s OpenClaw plugin, we ran into two issues:

First, Pine is a “person” rather than a “tool.” When Pine negotiates a bill for you, if the customer service rep finishes and reports the result, Pine needs to proactively notify the user. If you only make it a Skill, this can’t be done. So we had to make Pine into a Channel—so that OpenClaw thinks of Pine as someone it can talk to at any time, like Telegram or WhatsApp.

But after installation, OpenClaw frequently got confused—it would sometimes mix up the user and Pine, saying things meant for Pine to the user, and things meant for the user to Pine. We submitted a PR to OpenClaw, but it was closed. Later the OpenClaw founder posted on Twitter that OpenClaw’s design philosophy is one person with multiple Agents, and they will never consider multi‑person scenarios.

Second, the real world has multiple participants. For example, in Pine’s bill negotiation there are the user and the merchant, whose interests conflict. You can’t mix them up, and you can’t just be a passive relay; you have to be able to broker between them. More complex examples include: hospital bill negotiations that involve the user, the hospital, and the insurance company; hotel sanitation complaints that involve the user, the hotel, and the government Health Department—we had a real case where a user complained that a hotel’s hygiene made them sick, the hotel refused to compensate, we collected evidence and reported it to the Health Department, and the government investigated and directly shut the hotel down.

All these scenarios require an Agent that can manage communication among multiple people—and these people are not APIs. You can’t treat them like Skills that you call when needed and ignore when not; they can contact you at any time and you must be able to respond.

So we’ll probably build a similar framework ourselves—one that supports multiple people, handles multi‑party adversarial interactions, and is event‑driven rather than polling‑based.

Security: The Three Fatal Factors

People have also mentioned OpenClaw’s security issues. There’s a concept of the “three fatal factors”: ① access to the user’s private data; ② exposure to untrusted external content; ③ the ability to communicate externally. Pine happens to have all three. Add persistent memory—private data, untrusted content, external communication, and persistent memory together—and you get a very high‑risk environment.

So we did a huge amount of work to ensure security. This is also why using OpenClaw alone externally doesn’t give you these security features—for example, how do you guarantee it won’t suddenly delete all your emails, or send weird emails to your investors at 3 a.m.? These are things that require extensive engineering effort over time.

Coming back to my initial point: for most people, you probably still need commercial products to handle high‑risk, sensitive tasks; but for the top 1% of geeks, a fully open approach like OpenClaw is more suitable—powerful enough, and you bear all the risk yourself.

Part Two: PineClaw Product Practice

Pine AI: End‑to‑End Handling of Real‑World Tasks

Pine AI is the world’s first consumer‑grade AI agent that charges based on outcomes. You tell it what you want done, and it automatically does research, gathers information, designs a strategy, and then executes.

Regarding the “pay for results” model—Pine doesn’t charge by tokens, API calls, or call duration. If a bill negotiation succeeds, Pine takes a cut of the savings; if it fails, the user pays nothing. That way Pine’s incentives are fully aligned with the user—Pine has to truly solve the problem to earn money. We’ve already saved users tens of millions of dollars in total.

This model also sets a high bar internally: first, the success rate must be high, otherwise outcome‑based pricing loses money; second, end‑to‑end execution faces the security issues of the three fatal factors; third, the consequences of actions are irreversible—if you mess up a call to the bank, the money could be gone.

Two Essential Characteristics of Pine

First, fully async + event‑driven. People are not APIs. In real‑world tasks you need repeated interaction—Pine may come back at any time to ask for information, report progress, or request a verification code. This isn’t a one‑off tool invocation, but an asynchronous collaboration that lasts hours or even days. Most OpenClaw plugins rely on polling—either checking progress every few hours or waiting for the user to ask. Pine, however, is event‑driven—whenever the other party sends a message, it’s pushed in real time, which is far more efficient.

Second, contacting multiple parties at the same time, which may be adversarial. In bill negotiation, Pine connects to both the user and customer service at the same time—the user wants to save money, the customer service wants to keep profit. Pine negotiates in the middle. More complex scenarios involve three or even more parties.

PineClaw: Open to the Agent ecosystem

Pine AI has powerful voice call capabilities—human-like voice, calls lasting up to an hour, automatic IVR navigation. This made us think: can we open this capability so that OpenClaw users can also use Pine to make phone calls? That’s how PineClaw was born.

I spent five days defining the external interfaces, building a whole bunch of libraries, and basically open-sourced most of them— including OpenClaw Plugin, ClawHub Skills, MCP Server, Python SDK, CLI, all of these are now available.

Two usage modes:

Use the Pine product directly—if you’re not working in AI, you can just use Pine Assistant; the experience is exactly the same as the Pine product. Just describe what you want to do, and it will confirm with you step by step. For example, when booking a restaurant it will ask how many people, what time, and then automatically call to make the reservation.

Use Pine Voice to make calls directly—suitable for enterprise scenarios such as interviews, user interviews, user research, telemarketing, etc. Once you prepare the content and the goal, Pine Voice can make the calls for you.

Integration options are also very rich:

| Integration method | Description |

|---|---|

| OpenClaw Channel | openclaw-pine, Socket.IO persistent bidirectional connection |

| MCP Server | Remote HTTP (Voice) / Local uvx (Assistant) |

| Python SDK | pine-voice / pine-assistant |

| JS/TS SDK | pine-voice / pine-assistant |

| CLI | pineai-cli, one command covering all capabilities |

You can try it directly via pancloud.com, or add the MCP Server into Cursor, or plug it into OpenClaw, or integrate it into your own Agent via CLI and SDK.

Why openclaw-pine is a Channel instead of a Skill

There are deeper reasons behind this architectural decision. If it were implemented as a Skill, the Agent would call a CLI command and then poll for results—during the wait, the LLM would keep consuming tokens, and time-sensitive events (OTP codes, three-way calls) might be missed.

Using a Channel, on the other hand, a persistent bidirectional connection is maintained via Socket.IO—Pine events (forms, questions, call progress, OTP requests) are pushed to the Agent in real time, and the Agent’s replies reach Pine in real time, with zero LLM cost while waiting.

We spent a lot of effort making OpenClaw correctly handle events from different channels—when an Agent is chatting on WhatsApp, handling Pine bill-negotiation events, and responding to Telegram messages at the same time, precise event routing and session isolation are required.

Conclusion

OpenClaw gives Agents “hands”—to control computers, read and write files, execute code. Pine AI provides a “mouth”—to make phone calls, negotiate, and coordinate multi-party interests. PineClaw opens this mouth to the entire Agent ecosystem. When hands and mouth combine, Agents are no longer confined to the screen but truly reach into the real world.

In the future, we’ll also bring some of OpenClaw’s capabilities into Pine’s products and build more extensions. If you’re interested, you’re welcome to try PineClaw.

Event poster

Event poster

On-site sharing

On-site sharing